From Fork to Framework, Part 2: The Python and Svelte Rewrite

Our second iteration replaced the forks with a clean-room build using FastAPI and SvelteKit. Feature velocity was excellent. Operational complexity was not.

Starting From Scratch (Almost)

On December 16, 2025 – four days after we stopped active development on the LibreChat and LiteLLM forks – we created a new repository and began writing what we internally called “v2.” The goal was simple in statement and ambitious in scope: rebuild everything we had learned from the fork period as a clean-room implementation, without inheriting anyone else’s architectural decisions.

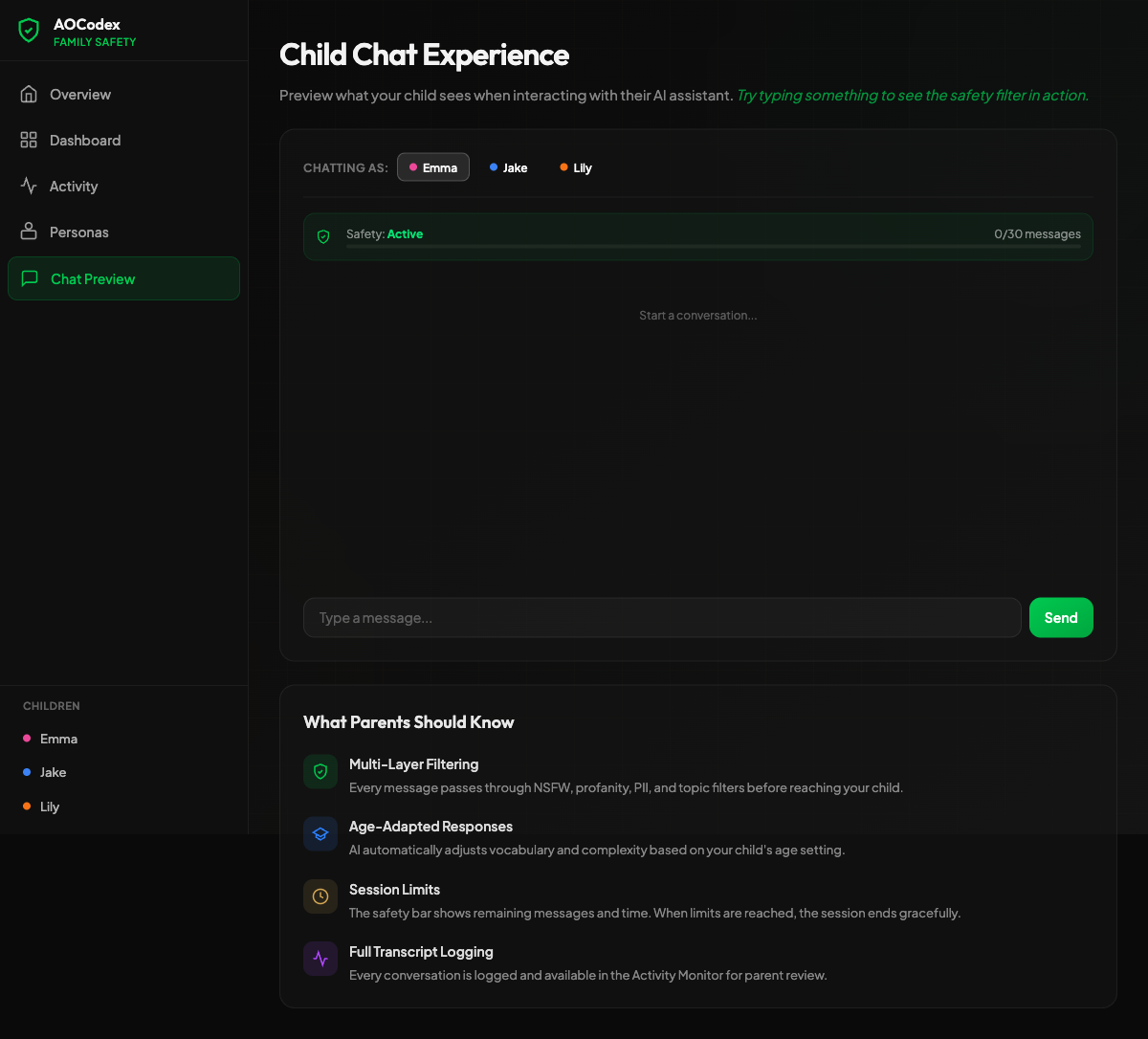

Over the next eight weeks, through February 13, 2026, we shipped 242 commits. We built a credit-based billing system, a persona engine, web search integration across three providers, a knowledge base with vector embeddings, memory extraction with entity relations, real-time collaboration, OAuth authentication, admin dashboards, and an early demo of family parental controls. We also learned, the hard way, that feature velocity and operational simplicity are not the same thing.

The Stack

We chose Python and Svelte for complementary reasons. Python gave us direct access to the machine learning ecosystem – every AI library, every embedding model, every NLP toolkit ships Python bindings first. Svelte gave us a reactive frontend with less boilerplate than React, a compiler-first architecture that produced smaller bundles, and SvelteKit’s file-based routing that made page creation trivially fast.

The full stack:

- Frontend: SvelteKit 2 + Flowbite Svelte Pro + Tailwind CSS 4

- Backend: FastAPI (Python 3.11+) with async/await

- ORM: SQLAlchemy 2.0 with SQLModel

- Database: PostgreSQL 16 with pgvector for embeddings

- Cache and pub/sub: Redis 7

- Background tasks: Celery with Redis broker

- Real-time: Socket.io

- AI providers: LiteLLM (as a library, not a fork)

- Deployment: DigitalOcean App Platform

The Architecture

Compared to the fork-based v1, this architecture had one clear advantage: we owned every line of application code. There were no upstream merges to dread, no rebranding to maintain, no foreign opinions about database schema design embedded in our models. The application layer was ours.

The infrastructure layer, however, had grown. Where v1 relied on MongoDB, PostgreSQL, and Redis, v2 required PostgreSQL (with pgvector), Redis (for both caching and Celery’s message broker), Celery workers, and a Socket.io server. Four infrastructure dependencies, each with its own failure modes, scaling characteristics, and operational overhead.

What We Built

The Credit System

We replaced Stripe subscription tiers with a credit-based billing model. Users purchased credits, and each AI interaction deducted a calculated cost based on the model, token count, and operation type. This was more flexible than flat-rate subscriptions – a user who sent ten messages a month paid less than a power user who sent ten thousand – and it mapped cleanly to our own costs, since AI providers charge per token.

SQLModel made the credit ledger straightforward: a CreditTransaction model with debit and credit entries, linked to users and conversations. The async SQLAlchemy 2.0 session gave us non-blocking database access inside FastAPI’s event loop, which mattered when a single chat response involved multiple database writes (deducting credits, storing the message, updating conversation metadata, logging the AI provider response).

Personas and Web Search

Personas let users configure system prompts, model preferences, temperature settings, and tool access as reusable profiles. A “Research Analyst” persona might use Claude with web search enabled and a low temperature; a “Creative Writer” persona might use GPT-4 with a high temperature and no tools.

Web search was integrated through three providers: Serper, Exa, and Tavily. Each returned different result formats, so we built a normalization layer that produced a consistent SearchResult schema regardless of provider. The AI model received search results as structured context, and the frontend rendered source citations inline.

Knowledge Base and Memory

The knowledge base used pgvector to store document embeddings in PostgreSQL. Users could upload documents, which were chunked, embedded (using OpenAI’s embedding models via LiteLLM), and stored as vectors alongside their source text. During conversation, relevant chunks were retrieved via cosine similarity and injected into the model’s context window.

Memory extraction went further: after each conversation, a background Celery task analyzed the exchange and extracted entity-relation triples. “The user’s daughter is named Sarah” became a structured relation (user -> daughter -> Sarah) stored in a dedicated memory table. These relations were retrieved in future conversations to give the AI persistent knowledge about the user – a feature that proved especially compelling in our family safety demos.

Real-Time Collaboration and Admin

Socket.io powered real-time features: streaming AI responses token-by-token to the frontend, live presence indicators showing which team members were online, and collaborative conversation viewing. The Socket.io server ran as a separate process, communicating with FastAPI through Redis pub/sub.

The admin dashboard, built with Flowbite Svelte Pro’s component library, provided user management, credit balance oversight, usage analytics, and system health monitoring. Flowbite’s pre-built tables, charts, and form components accelerated this work considerably – the admin UI was functional within a week.

The Supabase Detour

We initially used Supabase for authentication and real-time subscriptions, attracted by its managed PostgreSQL, built-in auth, and row-level security. A related experiment, eden-app-framework – a SvelteKit + Supabase + FastAPI + Tauri v2 concept for a desktop app – received exactly one commit on December 25 before we recognized it was a distraction.

Supabase itself became a problem for a different reason: we were running FastAPI with its own auth system alongside Supabase’s auth, creating two sources of truth for user identity. JWT tokens from Supabase needed to be validated in FastAPI middleware, but our credit system, admin roles, and team memberships lived in FastAPI’s database. The impedance mismatch produced bugs that were subtle and time-consuming to diagnose.

We migrated to FastAPI-native authentication – OAuth providers wired directly into our SQLAlchemy user model – and the entire auth surface area simplified. Supabase is an excellent product for teams that build on top of it; it is a less natural fit when your backend already has its own opinions about data and identity.

What Python and Svelte Gave Us vs. What It Cost Us

| Advantage | Cost |

|---|---|

| Direct access to ML/AI ecosystem – every library is Python-first | GIL limits true concurrency; async helps I/O but not CPU-bound work |

| FastAPI’s automatic OpenAPI docs and Pydantic validation | Python’s runtime type system means errors surface at runtime, not compile time |

| SvelteKit’s compiler-first approach produces small, fast bundles | Svelte’s ecosystem is smaller than React’s; fewer off-the-shelf components |

| Flowbite Svelte Pro accelerated admin UI development | Commercial component library created a licensing dependency |

| SQLAlchemy 2.0 async is mature and well-documented | SQLModel’s convenience layer occasionally fights SQLAlchemy’s more advanced features |

| pgvector keeps embeddings in PostgreSQL – no separate vector DB | Vector search performance degrades at scale compared to purpose-built solutions |

| Rapid prototyping – 242 commits in 8 weeks with a small team | Four infrastructure dependencies (PostgreSQL, Redis, Celery, Socket.io) multiply operational surface area |

| LiteLLM as a library gave us provider abstraction without the fork tax | Still a Python dependency with its own release cadence and breaking changes |

| Tailwind CSS 4 utility classes kept styling consistent | Tailwind + Flowbite + SvelteKit + custom theme = four layers of styling abstraction |

| DigitalOcean App Platform simplified initial deployment | App Platform’s constraints became visible as we needed WebSocket support, background workers, and custom networking |

The Operational Complexity Problem

Feature velocity was genuinely excellent during this period. SvelteKit’s file-based routing meant a new page was a new file. FastAPI’s dependency injection made adding authenticated, validated endpoints mechanical. SQLModel reduced ORM boilerplate. The team was shipping product features every day.

But each feature added operational weight. The credit system needed Celery tasks to reconcile balances. Memory extraction needed Celery tasks to run after conversations. Web search needed Redis caching to avoid redundant API calls. Real-time collaboration needed Socket.io, which needed Redis pub/sub, which needed careful connection management to avoid memory leaks.

By late January 2026, our local development environment required: PostgreSQL, Redis, a Celery worker, a Celery beat scheduler, the FastAPI server, the Socket.io server, and the SvelteKit dev server. Seven processes. On DigitalOcean App Platform, this translated to multiple services with their own scaling, health checks, and log streams.

The infrastructure was not unmanageable – teams run far more complex stacks in production every day. But for a small team building a product that was still finding its market, the ratio of infrastructure management to feature development was trending in the wrong direction. Every hour spent debugging a Celery task that silently failed because Redis hit its connection limit was an hour not spent on the product.

Python’s Concurrency Story

FastAPI’s async/await model handled I/O-bound work well. Waiting for AI provider responses, database queries, and Redis operations all yielded cleanly to the event loop. But Python’s Global Interpreter Lock meant that CPU-bound work – embedding generation, PII detection with Presidio, entity extraction from conversation text – blocked the event loop or required offloading to Celery.

Celery is a proven solution for background task processing. It is also a distributed system with its own failure modes: tasks can be lost if the broker restarts, retry logic needs careful configuration, and monitoring requires additional tooling (Flower, custom health checks). For a product that needed to process every conversation in near-real-time, the Celery dependency felt increasingly heavy.

The Frontend Question

SvelteKit 2 was a pleasure to develop with. The compiler catches classes of bugs that React surfaces only at runtime. Reactivity is built into the language rather than bolted on through hooks. Server-side rendering and hydration work out of the box.

However, our product roadmap included native mobile apps and eventually a desktop client. SvelteKit produces web applications. While frameworks like Capacitor or Tauri (hence the eden-app-framework experiment) can wrap web apps in native shells, the result is a web view that looks and feels like a web view. Users notice. Our family safety product needed to feel native on iOS and Android – parental controls that feel like a website do not inspire confidence.

Flowbite Svelte Pro, while high-quality, compounded this concern. It is a web component library. Its components do not translate to native mobile. Choosing it for our admin dashboard was fine; building our entire product UI on it meant every component would need to be rebuilt when we moved to a cross-platform framework.

The Decision

By mid-February 2026, the Python and Svelte rewrite had proven two things:

We knew what our product was. The credit system, personas, web search, knowledge base, memory, and real-time collaboration were not experiments anymore. They were the product. The fork period identified the features; the rewrite validated them.

We knew what our stack needed to be. We needed a backend language with real concurrency – not async-over-GIL, but true parallel execution for CPU-bound AI workloads. We needed a frontend framework that compiled to native mobile, native desktop, and web from a single codebase. And we needed to own our component library so that our UI was not coupled to a commercial dependency.

The Python and Svelte rewrite was not a failure. It was the iteration that clarified our requirements with enough specificity to make the right long-term choice. Two hundred and forty-two commits in eight weeks is not wasted work – it is a thorough, production-tested requirements document.

What came next was an evaluation of the frameworks that could meet those requirements. One of them nearly won.

That is Part 3.