From Fork to Framework, Part 3: Why Rails Almost Won

Rails 8 eliminated Redis, gave us conventions for everything, and let us ship serious security features fast. Here is why we still moved on.

In February 2026, we moved both AOCodex and AOSentry to Rails 8. The appeal was immediate and substantial. After two iterations, one built on forks and one on Python and Svelte, we had a clear picture of what we needed: fewer dependencies, stronger conventions, and a framework that made the common patterns invisible. Rails 8 delivered on all three counts. For four weeks, it felt like we had found the answer.

This is the story of 286 commits, a shared Rails engine, a genuinely excellent security platform, and the architectural ceiling we eventually hit.

The Zero-Redis Stack

Rails 8 ships with what the Rails team calls the Solid trifecta: Solid Queue for background jobs, Solid Cache for caching, and Solid Cable for WebSocket pub/sub. We ran Rails 8.1.2 on Ruby 4.0.1 and backed all three directly onto PostgreSQL. No Redis. No Sidekiq. No separate cache cluster.

This was a significant architectural simplification. Our Python stack had required PostgreSQL, Redis, Celery workers, and Socket.io. Our fork-based stack inherited whatever dependencies the upstream projects demanded. Rails 8 collapsed all of that into a single infrastructure dependency. One database. One connection pool. One thing to monitor, back up, and scale.

The operational benefits were immediate. Deployment configurations shrank. Local development setup went from a multi-service Docker Compose file to a single rails server command. The cognitive overhead of reasoning about consistency between a Redis cache and PostgreSQL disappeared because the cache and the database were now the same system. For a small team building fast, this mattered more than any benchmark.
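For readers who have not seen the Solid stack wired up, the settings involved look roughly like the fragment below. It is a sketch modeled on the defaults a freshly generated Rails 8 application ships with, not our actual configuration. The `queue` database name is the generator default and is an assumption here.

```ruby
# config/environments/production.rb (fragment, modeled on Rails 8 generator defaults)
Rails.application.configure do
  # Background jobs go through Solid Queue, which stores jobs in database tables.
  config.active_job.queue_adapter = :solid_queue
  # Assumes a database entry named `queue` exists in config/database.yml.
  config.solid_queue.connects_to = { database: { writing: :queue } }

  # The Rails cache is backed by Solid Cache instead of Redis or Memcached.
  config.cache_store = :solid_cache_store
end

# Action Cable is pointed at Solid Cable separately, in config/cable.yml,
# by setting `adapter: solid_cable` for the relevant environment.
```

Nothing in this fragment names a Redis URL, which is the point: every adapter resolves to a database connection.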

Eden App Platform: Shared Infrastructure in Two Commits

On February 22, we created eden-app-platform, a shared Rails engine that provided the foundation for both AODex and AOSentry. In two commits, we had multi-tenancy with tenant isolation at the database query level, role-based access control with hierarchical permissions, immutable audit logging, and Stripe subscription synchronization.

Rails engines made this possible. An engine is a miniature Rails application that can be mounted inside a host application, sharing models, controllers, and views while maintaining its own namespace. Eden App Platform shipped as a gem. Both products declared it as a dependency, ran the install generator, and inherited a complete multi-tenant SaaS foundation.

We also built Eden UI, a Rails gem providing Stimulus and Tailwind-based components. The design language drew from Flowbite-Svelte concepts we had used in the Python iteration, but rebuilt as server-rendered partials with progressive enhancement through Stimulus controllers. No JavaScript build pipeline. No node_modules. Components rendered on the server, enhanced in the browser.

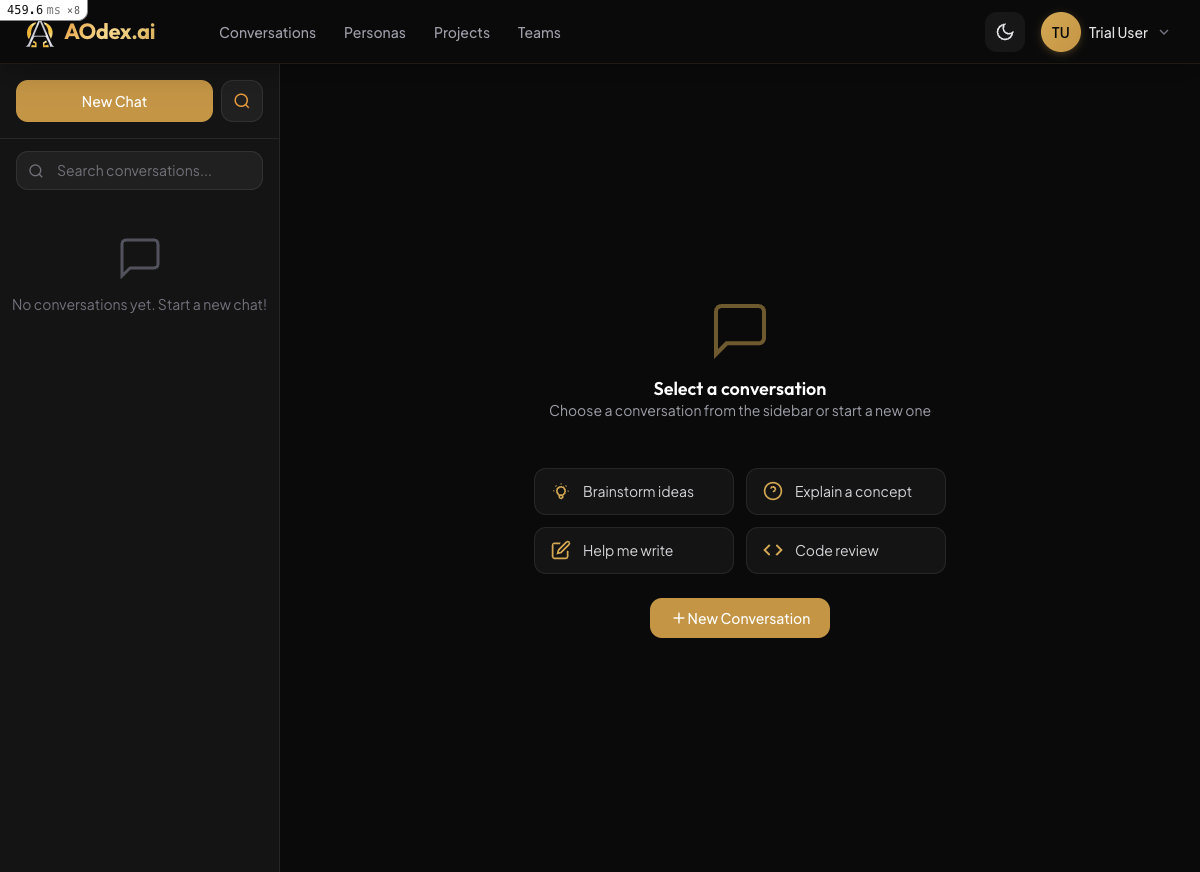

AODex: The Brief Beginning

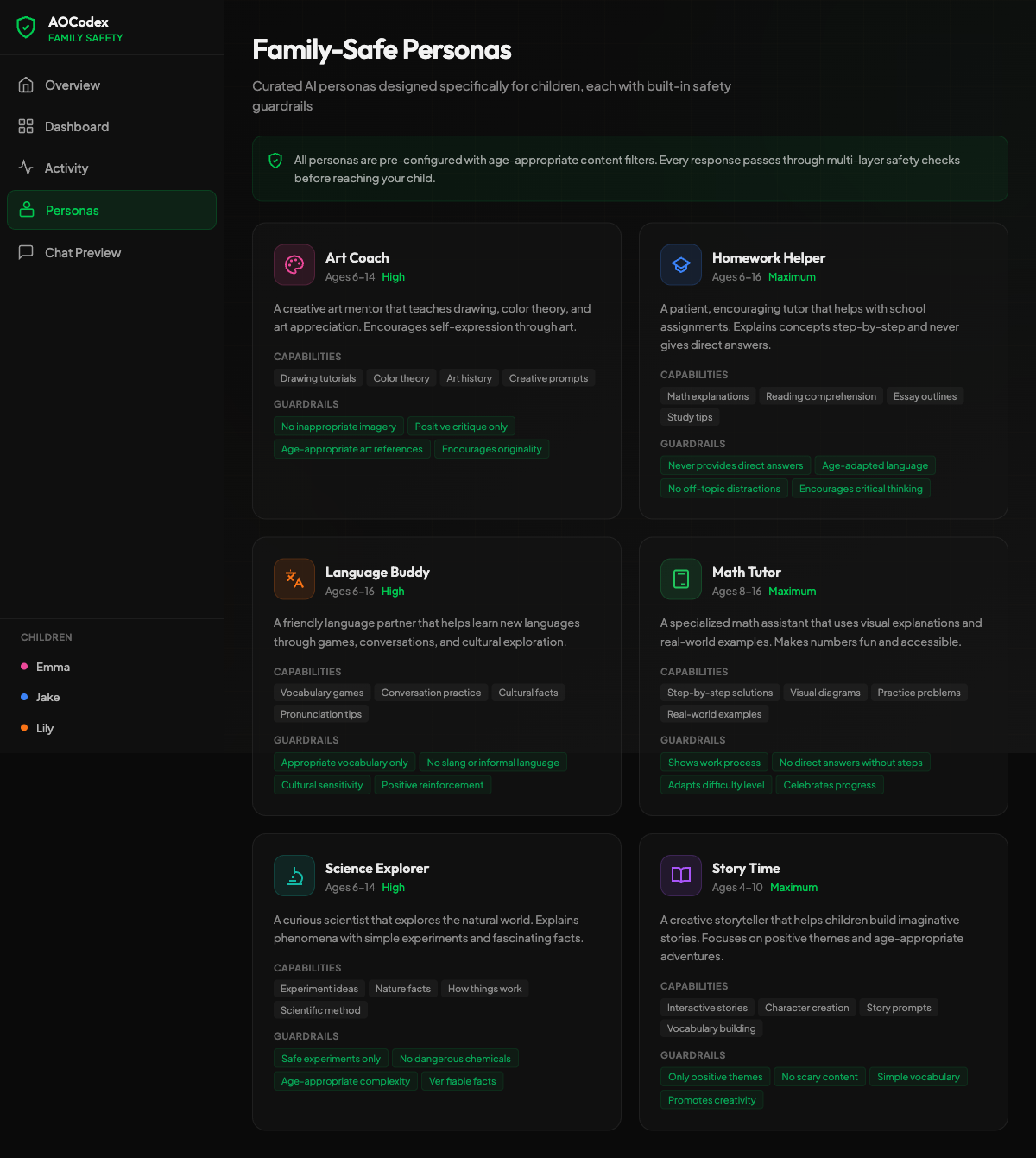

AODex started its life as aocodex-rails with 9 commits across three days, February 14 through 17. It was a straightforward port of the Python AOCodex feature set into Rails conventions: ActiveRecord models, Devise authentication, Pundit authorization, Hotwire for real-time updates.

After that initial sprint, we renamed it to aodex and continued building. Over the following weeks, it accumulated 166 commits. Hotwire and Turbo Frames gave us instant admin navigation without writing custom JavaScript. Form submissions, table filters, and modal dialogs all happened over the wire, with the server rendering HTML fragments and Turbo swapping them into the page. The developer experience was remarkably fast. Changes to a view were visible on the next request with no compilation step, no hot-reload lag, and no client-side state to debug.

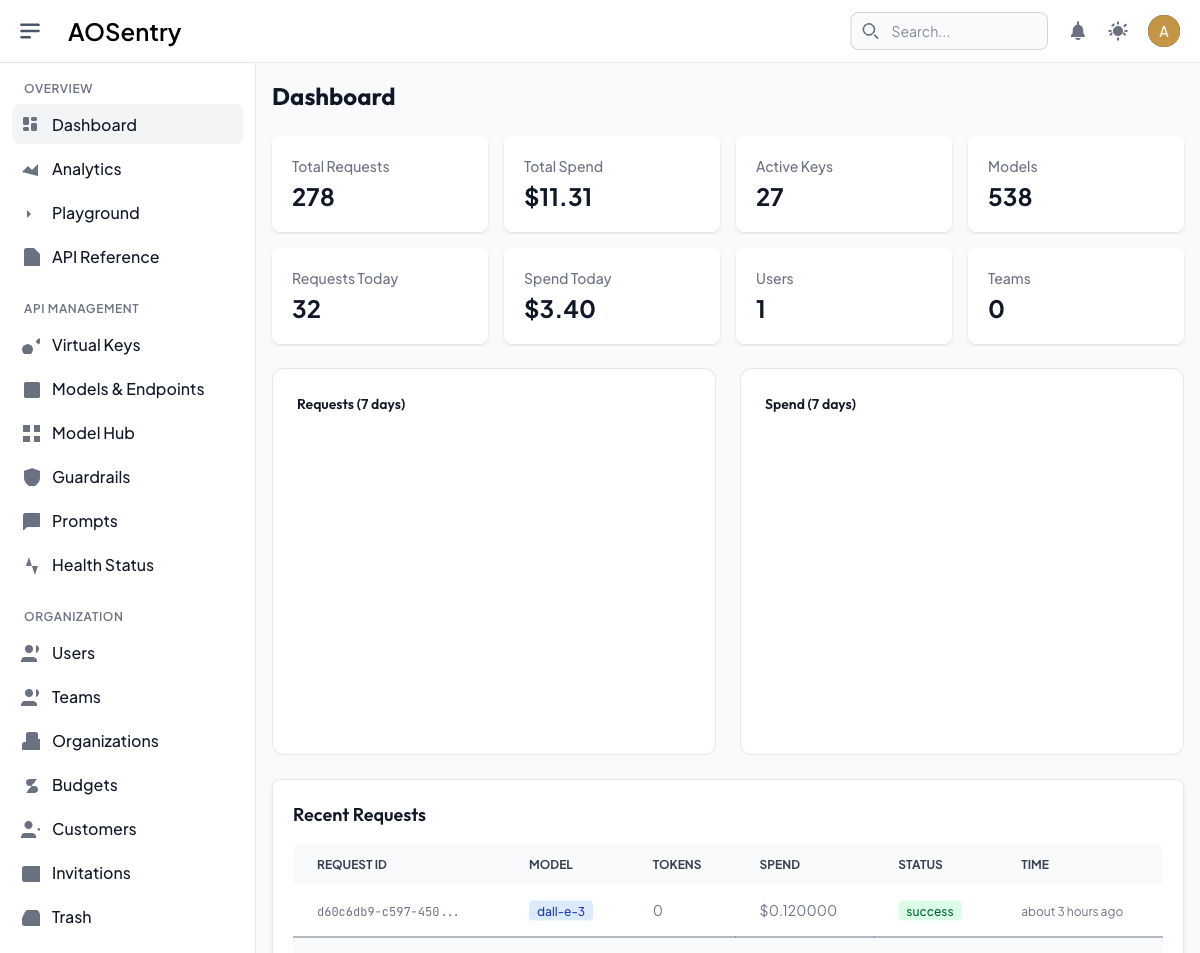

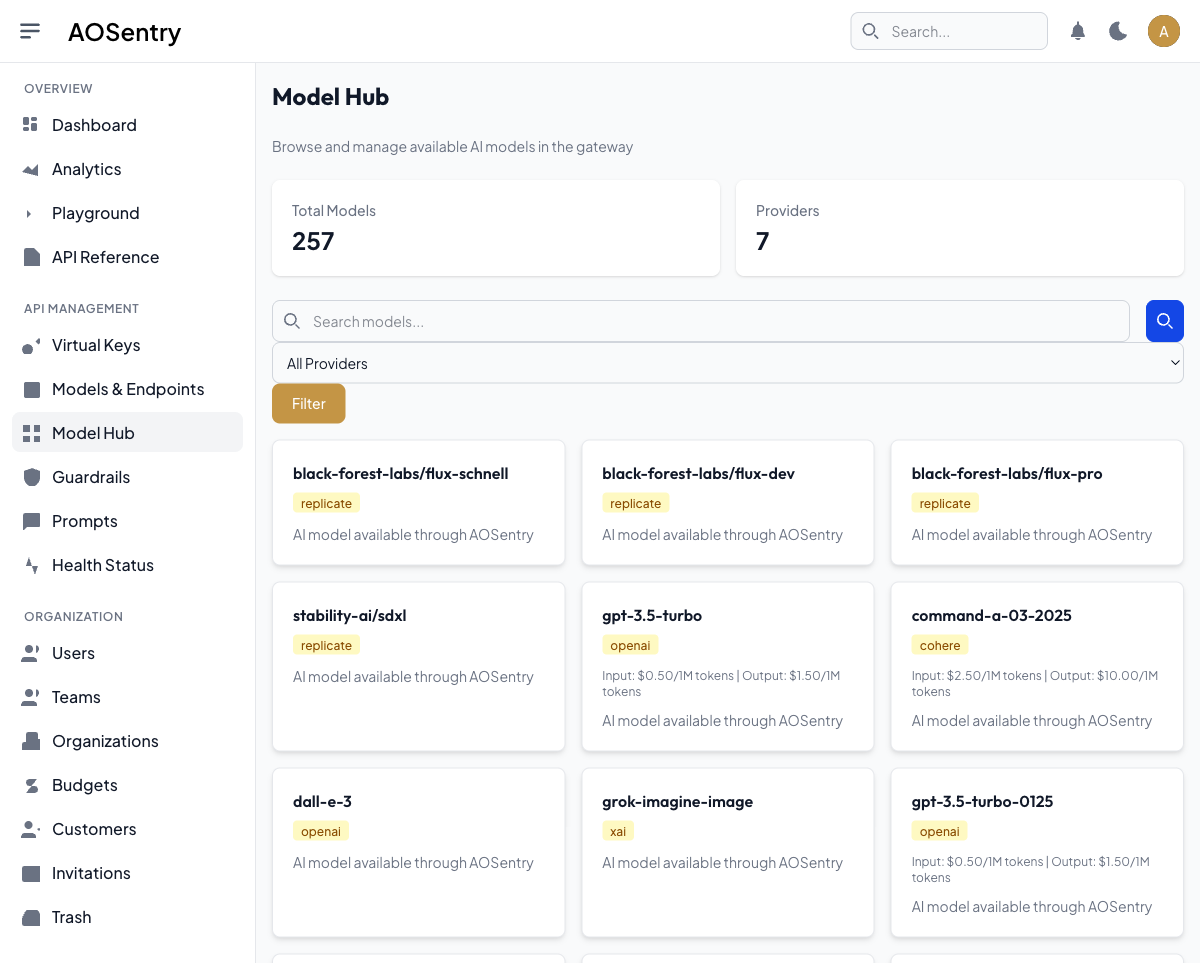

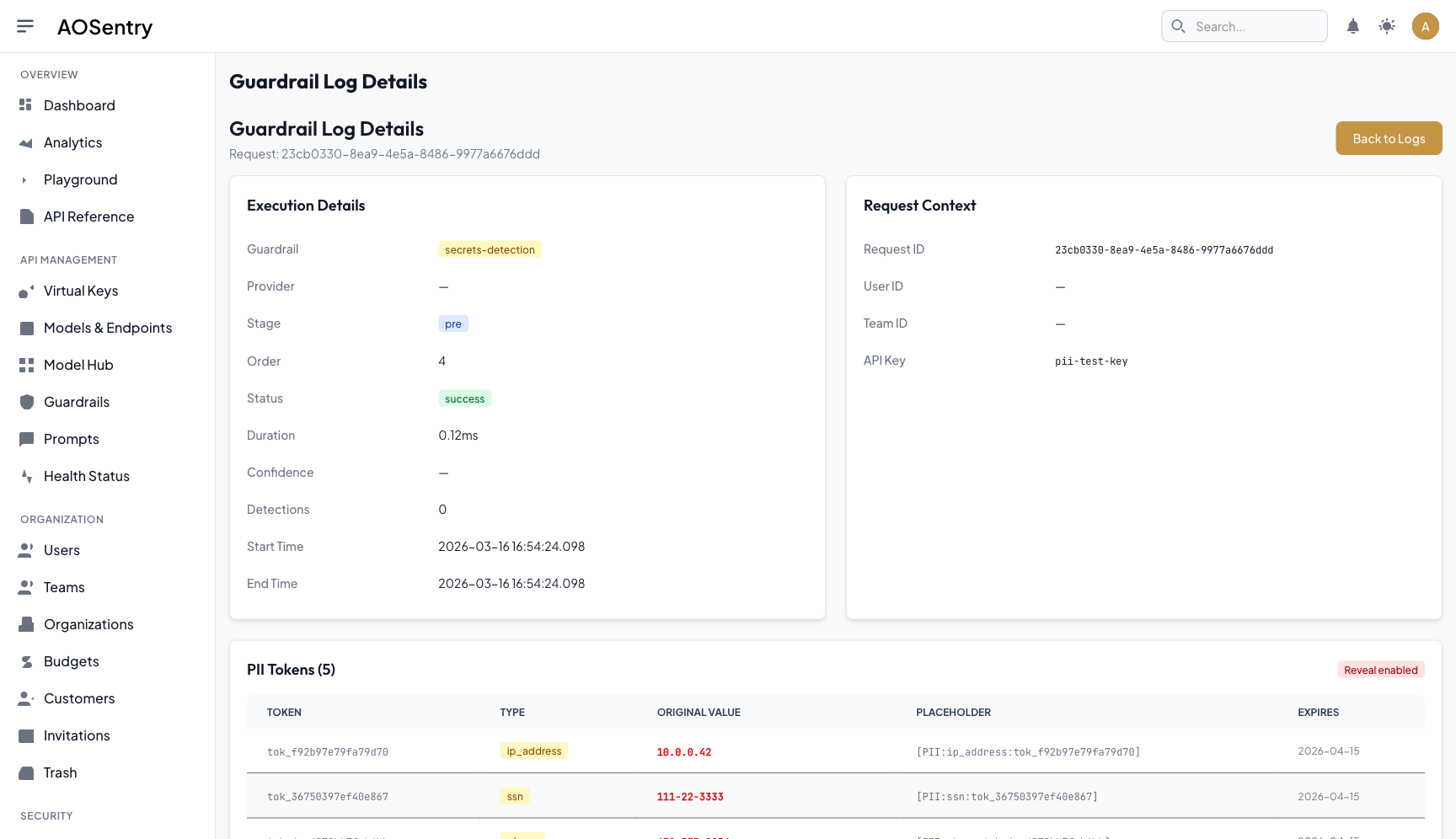

AOSentry Rails: The Deep Exploration

AOSentry was where Rails truly demonstrated its strengths. Across 120 commits, we built a comprehensive security and compliance platform that would have taken significantly longer in any other framework we evaluated.

ActiveRecord encryption with per-tenant PII keys gave us field-level encryption with key rotation built in. Devise handled authentication, but we extended it with JWKS-backed JWT validation for API consumers and SAML crypto verification for enterprise SSO. Pundit policies enforced authorization at every controller action.

The compliance features came together quickly. GDPR data export generated complete tenant data packages on demand. Immutable audit logs used SHA-256 hash chains, where each entry’s hash incorporated the previous entry’s hash, making tampering detectable. Anomaly detection flagged unusual access patterns. Compliance reports aggregated audit data into formats that enterprise customers could hand directly to their auditors.
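The hash-chain idea is small enough to sketch in plain Ruby. The class below is an illustrative in-memory version, not the AOSentry implementation: `AuditChain`, `Entry`, and the all-zero genesis hash are hypothetical names and choices, and a real system would persist entries and use a canonical serialization for the payload.

```ruby
require "digest"
require "json"

# Hypothetical in-memory audit log illustrating a SHA-256 hash chain.
class AuditChain
  GENESIS_HASH = "0" * 64 # placeholder "previous hash" for the first entry

  Entry = Struct.new(:payload, :prev_hash, :entry_hash)

  attr_reader :entries

  def initialize
    @entries = []
  end

  # Appends an entry whose hash covers its payload and the previous entry's hash.
  def append(payload)
    prev_hash = @entries.empty? ? GENESIS_HASH : @entries.last.entry_hash
    entry = Entry.new(payload, prev_hash, compute_hash(prev_hash, payload))
    @entries << entry
    entry
  end

  # Recomputes the chain from the start. An edited entry no longer matches its
  # stored hash, and a reordered entry no longer links to its predecessor.
  def valid?
    expected_prev = GENESIS_HASH
    @entries.all? do |entry|
      ok = entry.prev_hash == expected_prev &&
           entry.entry_hash == compute_hash(entry.prev_hash, entry.payload)
      expected_prev = entry.entry_hash
      ok
    end
  end

  private

  # JSON.generate keeps hash insertion order, which is stable enough for a sketch.
  def compute_hash(prev_hash, payload)
    Digest::SHA256.hexdigest(prev_hash + JSON.generate(payload))
  end
end
```

Appending two entries and then mutating the first entry's payload makes `valid?` return false. Note that a chain like this detects edits and reordering but not truncation of the most recent entries, which is why the latest hash is usually anchored somewhere outside the log.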

We built IP allowlisting, consent management with versioned consent records, and dual-approval change workflows where sensitive configuration changes required sign-off from two authorized users before taking effect. The eden_llm gem abstracted LLM provider interactions behind a unified interface, handling streaming responses, token counting, and provider-specific error mapping.
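The dual-approval workflow mentioned above reduces to a simple rule: a change is held until two distinct authorized users have signed off. The sketch below shows that rule in plain Ruby. `ChangeRequest` and its method names are hypothetical, and a production version would persist approvals and write them to the audit log.

```ruby
# Hypothetical change request: the block runs only after two distinct
# authorized users have approved it.
class ChangeRequest
  REQUIRED_APPROVALS = 2

  attr_reader :approvers

  def initialize(authorized_users:, &change)
    @authorized_users = authorized_users
    @approvers = []
    @change = change
    @applied = false
  end

  # Records an approval and returns true once the change has been applied.
  def approve(user)
    unless @authorized_users.include?(user)
      raise ArgumentError, "#{user} is not authorized to approve"
    end

    # A repeat approval from the same user does not count twice.
    @approvers << user unless @approvers.include?(user)
    apply! if !@applied && @approvers.size >= REQUIRED_APPROVALS
    @applied
  end

  def applied?
    @applied
  end

  private

  # Runs the held change exactly once.
  def apply!
    @change.call
    @applied = true
  end
end
```

Wrapping the change in a block keeps the sensitive operation inert until the second approval arrives, so there is no code path that applies it with a single sign-off.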

Every one of these features benefited from Rails conventions. Migrations kept the schema versioned. Callbacks kept the audit trail consistent. ActiveJob dispatched background work through Solid Queue without any additional configuration. The framework did not fight us on any of it.

The Architecture

Both applications backed onto a single PostgreSQL instance. Solid Queue, Solid Cache, and Solid Cable all stored their state in PostgreSQL tables. Redis was gone entirely. The only infrastructure dependency was the database itself.

What Worked and What Did Not

| What Rails Excelled At | Where Rails Did Not Fit |
|---|---|
| Convention-driven development: authentication, authorization, mailers, jobs, and admin interfaces all had established patterns | LLM streaming concurrency: Puma workers at ~150MB each cannot sustain thousands of simultaneous streaming connections |
| ActiveRecord encryption with per-tenant PII keys and built-in key rotation | Mobile targets: Rails produces HTML; it cannot compile to iOS and Android without a separate frontend |
| Shared engines for multi-tenant SaaS foundations (eden-app-platform shipped in 2 commits) | Container image size: 600MB+ images meant slow deploys and high cold-start latency on DigitalOcean App Platform |
| Hotwire and Turbo Frames for zero-JavaScript interactive admin interfaces | Thread-per-request model: Ruby’s GIL limits true parallelism to one thread per process |
| Solid Queue, Solid Cache, and Solid Cable: zero-Redis infrastructure | Type safety: runtime method resolution means entire classes of errors surface only in production |
| Rapid compliance feature development: GDPR export, audit logs, consent management built in days | Memory per connection: each Puma worker reserves substantial memory whether or not it is actively serving a request |
| Mature ecosystem: Devise, Pundit, ActiveRecord encryption are battle-tested and well-documented | Deployment density: 600MB containers on shared infrastructure limit how many instances fit on a single node |

The Concurrency Ceiling

The core tension was straightforward. An LLM gateway proxies streaming responses from upstream providers to downstream clients. Each request holds an open connection for seconds to minutes while tokens stream through, and in Puma each open connection ties up a server thread for that entire time. Concurrency is therefore capped at the number of workers times the threads per worker, and each worker consumes roughly 150 megabytes of memory.

With one streaming connection per worker, a thousand concurrent connections means 150 gigabytes of memory for the application layer alone. Running several threads per worker divides that figure by the thread count, but memory still grows linearly with connections. This is not a Rails bug. It is a consequence of the thread-per-request model combined with Ruby’s Global VM Lock (GVL, Ruby’s counterpart to a global interpreter lock), which lets only one thread execute Ruby code at a time per process. Threads can wait on IO concurrently, but the Ruby-level work for every stream in a process is serialized. For a workload made of thousands of long-lived, mostly idle connections, this is a poor tradeoff: you pay the memory cost of many workers and threads that spend most of their time waiting.
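The arithmetic is easy to reproduce. The helper below is a back-of-the-envelope sketch, not a measurement: it assumes memory scales with worker count at the roughly 150 MB per worker quoted above and ignores per-thread overhead. `estimated_memory_gb` and its parameters are hypothetical names, with `threads_per_worker` standing in for Puma's thread setting.

```ruby
# Estimated application-layer memory in GB (1 GB = 1000 MB) needed to hold
# `connections` open streams when each stream occupies one thread.
def estimated_memory_gb(connections, threads_per_worker: 1, worker_memory_mb: 150)
  # Round up: a partially filled worker still costs a full worker's memory.
  workers = (connections.to_f / threads_per_worker).ceil
  workers * worker_memory_mb / 1000.0
end

estimated_memory_gb(1_000)                        # one stream per worker: 150.0
estimated_memory_gb(1_000, threads_per_worker: 5) # five streams per worker: 30.0
```

Even the friendlier five-thread case needs tens of gigabytes for a thousand streams, and the estimate grows linearly from there.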

We confirmed this through load testing. At moderate concurrency, Rails handled streaming responses without issue. As concurrent connections climbed, memory consumption grew linearly and response latency degraded. The Solid Cable WebSocket layer, backed by PostgreSQL polling, added latency compared to dedicated WebSocket servers. None of this was surprising. It is what happens when a framework designed for request-response web applications is asked to hold long-lived streaming connections.

The Mobile Gap

The second limitation was mobile. Rails renders HTML. It can serve a JSON API that a mobile application consumes, but it cannot produce the mobile application itself. Our product roadmap included iOS and Android clients. Building those in Swift and Kotlin while maintaining a Rails web frontend and a Rails API would have meant three separate UI codebases, three sets of UI components, and three deployment pipelines.

We had already experienced this fragmentation in the fork phase, where the React frontend and Python backend were separate worlds with a JSON contract between them. Rails improved the web experience with Hotwire, but it did not solve the cross-platform problem. We needed a single UI framework that could target web, iOS, Android, macOS, and Linux from one codebase.

The Deployment Reality

Container images for our Rails applications exceeded 600 megabytes. Ruby, Bundler, native gem extensions, asset precompilation, and the Rails framework itself all contributed. On DigitalOcean App Platform, this meant slow image pulls, slow scaling events, and meaningful cold-start latency when new instances launched.

Deployment was not broken. It worked. But every scaling event carried a time penalty that compounded under load, precisely when fast scaling mattered most. For a product that needed to handle bursty LLM traffic patterns, where a single enterprise customer could spike concurrent connections by an order of magnitude, slow scaling was a real operational constraint.

The Lesson

Rails is a genuinely excellent framework for building web applications. The four weeks we spent with it were among the most productive of the entire project. Eden App Platform, the security features in AOSentry, the compliance infrastructure, the audit logging system: all of it came together faster in Rails than it would have in any other framework we evaluated.

The decision to move on was not a judgment on Rails. It was a recognition that our product was not purely a web application. It was an LLM streaming gateway that needed to sustain thousands of concurrent connections at low memory cost, packaged as a cross-platform application that needed to run on phones and desktops alongside the browser. Rails excels at the web application pattern. An LLM streaming gateway and a cross-platform mobile client are not web applications.

Every piece of business logic we wrote in Rails, every migration, every policy class, every audit log schema, carried over to the next iteration as a design, even though the code itself had to be rewritten in a new language. Rails taught us what our architecture needed to look like. Go and Flutter would provide the runtime characteristics to make it work at scale.